The mongo Shell and the MongoDB Node.JS Driver both provide a way to interact with a Mongo database. There are fairly significant differences in how they work, however, as well as the benefits they provide.

There are multiple ways to interact with MongoDB, and two of those are with the mongo shell and the MongoDB Node.js driver. Now at this point it might make sense to ask which approach is best. Well, the answer really depends on the scenario. So, perhaps the first question should be: “What is it that I need to do?” Once that question is answered, you can determine which tool is best suited for the task. In this article, I’ll demonstrate the differences between the mongo shell and the MongoDB Node.js driver when performing basic CRUD operations. My hope is that this will help you to decide which approach works best for what you need to do.

The mongo shell is an interactive JavaScript interface to MongoDB, and it is a component of the MongoDB package. The mongo shell can be used to perform CRUD operations on data, as well as administrative operations. In other words, think of the mongo shell as a way to interact with a MongoDB database without the need to build or interact with an application.

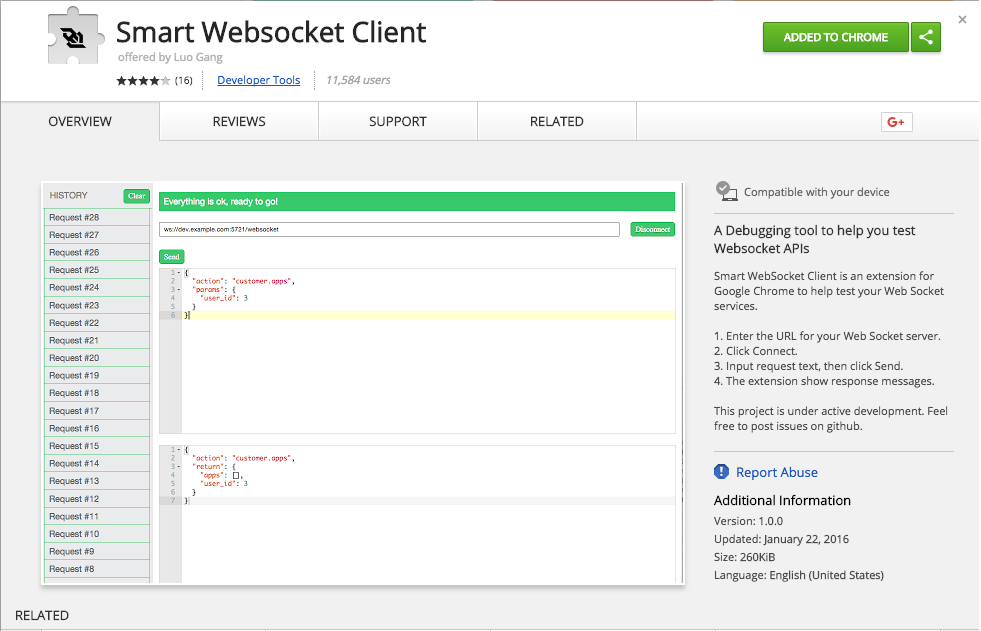

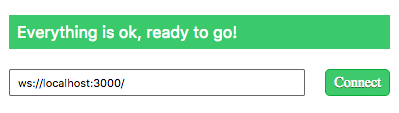

The MongoDB Node.js driver provides a way to interact with a MongoDB database from your Node application code. It supports both callback-based and Promise-based interaction with your MongoDB database. This is the opposite of the mongo shell, which is meant to be used interactively from a terminal rather than from your Node.js application code.

Inserting One Document Into the Database

Insert One Document with the Mongo Shell – Example # 1A

Insert One Document with the MongoDB Node.JS Driver – Example # 1B

With the mongo shell, we need to specify which database we want to use. We do this with the “use” command, whose syntax is: “use DATABASE_NAME”. So, in Example # 1A, we accomplish two things: we select the madMen database with the use command (i.e. “use madMen”), and then we insert one document into the names collection. Actually, a third step was taken here, although you may not have noticed because it was not explicit: the names collection was created. With the mongo shell, if we reference a collection that does not already exist when using the insert command, then that collection is created. Note that when we inserted the document, we passed an object to the insert method. This object can have one or more key/value pairs. In this case, we provided just one key/value pair.

You’ll notice that in Example # 1B, and all of the following MongoDB Node.js driver examples, there is more code. The reason for this is that this is application code, so there are some setup steps needed in order to provide dependencies to our application and tell it what we want to do. With the mongo shell, there is context. That is to say, the mongo shell understands that you will be performing MongoDB-specific tasks, so there is no need to provide dependencies or explain much.

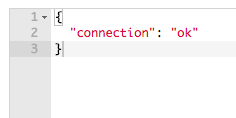

Now here in Example # 1B, we accomplish the same tasks using the MongoDB Node.js driver. The first five lines of code provide dependencies and some configuration information. On line # 8, we establish a connection to the madMen database using the mongoDbClient.connect() method. This method takes a callback, and inside the callback we set references to the madMen database and the names collection. We then use the insert method of the names collection to insert one document. We also add some console.log() statements, just to provide some helpful messages so that we can see that the operation was successful. So far, so good.
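For readers who want something to type in, here is a minimal sketch of the single-document insert using the driver's Promise-based style. It is not the original Example # 1B: it assumes a recent driver version (where callbacks are no longer available), a server at mongodb://localhost:27017, and a hypothetical document of the form { name: 'Don Draper' }. The helper names insertOneName and main are made up for illustration.

```javascript
// Sketch only: insert one document with a promise-based MongoDB Node.js driver.
// Shell equivalent, per the article: use madMen, then db.names.insert({ ... })
// `names` is a driver Collection, e.g. client.db('madMen').collection('names').
async function insertOneName(names, name) {
  // The document has a single key/value pair; the key "name" is an assumption.
  const result = await names.insertOne({ name });
  console.log(`Inserted document with _id: ${result.insertedId}`);
  return result.insertedId;
}

// Wiring against a live server (assumed URL; requires `npm install mongodb`).
async function main() {
  // Loaded lazily so the helper above can be exercised without the driver installed.
  const { MongoClient } = require('mongodb');
  const client = new MongoClient('mongodb://localhost:27017');
  try {
    await client.connect();
    const names = client.db('madMen').collection('names');
    await insertOneName(names, 'Don Draper');
  } finally {
    // Always close the connection, even if the insert fails.
    await client.close();
  }
}
// Call main() to run this against a real database.
```

Keeping the insert in its own function that receives the collection makes it easy to reuse the same connection for the other operations in this article.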

Inserting Multiple Documents Into the Database

Insert Multiple Documents with the Mongo Shell – Example # 2A

Insert Multiple Documents with the MongoDB Node.JS Driver – Example # 2B

In Example # 2A we insert multiple documents into the madMen database using the mongo shell, and we do this in two ways. First, we insert the new documents one at a time. There is no need for a for-loop as this is not application code; since we are in the mongo shell, we can simply run each command manually. Then, we insert three new documents by using the insertMany() method. The difference between the two is that with the insert() method you pass one document object as an argument, whereas with the insertMany() method you provide an array of document objects.

In Example # 2B we insert multiple documents into the madMen database, using the MongoDB Node.js driver. The difference between this code and the code found in Example # 2A is that instead of only passing an array of objects to the collection.insertMany() method, we also provide a callback as the second argument. The callback is not required, but it is likely that you will want to provide it because the collection.insertMany() method is asynchronous and you will likely want to act upon the successful insertion of the documents. So, in this example, we’ve shown a couple of console.log() messages to indicate that the database insert was a success. But more importantly, we’ve called the database.close() method, which, as you might expect, closes the database connection. The main thing to keep in mind about leveraging the collection.insertMany() method in your Node application is that it is an asynchronous action, as is often the case in Node.
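As a rough illustration of the multi-document insert, the sketch below uses async/await in place of the callback described above, which is an assumption about the driver version you are running. The helper name insertManyNames and the { name: ... } document shape are hypothetical.

```javascript
// Sketch only: insert several documents in one call with a promise-based driver.
// Shell equivalent, per the article: db.names.insertMany([ {...}, {...}, {...} ])
// `names` is a driver Collection, e.g. client.db('madMen').collection('names').
async function insertManyNames(names, nameList) {
  // insertMany() takes an array of document objects, not a single object.
  const docs = nameList.map((name) => ({ name }));
  const result = await names.insertMany(docs);
  // The insert is asynchronous, so we only report success after awaiting it.
  console.log(`Inserted ${result.insertedCount} documents`);
  return result.insertedCount;
}
```

You might call this as insertManyNames(names, ['Peggy Olson', 'Roger Sterling', 'Joan Holloway']) and close the client once the returned promise resolves, which plays the same role as the close call inside the callback.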

Viewing All Documents in the Database

View All Documents with the Mongo Shell – Example # 3A

View All Documents with the MongoDB Node.JS Driver – Example # 3B

In Example # 3A, we use the mongo shell to view all documents in the names collection by simply executing the command: db.names.find(). If we were executing a script file in the shell, we’d need to set a reference to all of the documents, set up a loop, and then in each iteration of the loop output the current document. But because the mongo shell provides REPL functionality, we can simply execute an expression that results in a value representing every document in the collection.

In Example # 3B, we use the MongoDB Node.js driver to view all of the documents in the names collection, and here, we need to roll up our sleeves, because we have a little more work to do. Once again, this is because this is application code, so we need to explain to Node exactly what we want to do. So, if you’ll take a look at line # 11, you’ll see that we use the find() method to obtain a reference to all of the documents. We then chain the each() method to the return value of this, passing it a callback. In the callback, the second argument is the current document over which we are iterating, so we log that document. If the current document is null, there is nothing left to iterate over, so we close the database connection.
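Here is one way the "view everything" step might look with a newer driver. Instead of each(), this sketch iterates the cursor with for await, which assumes a driver version whose cursors are async-iterable. The helper name listAllNames is hypothetical.

```javascript
// Sketch only: read every document in a collection with a promise-based driver.
// Shell equivalent, per the article: db.names.find()
// `names` is a driver Collection, e.g. client.db('madMen').collection('names').
async function listAllNames(names) {
  const docs = [];
  // An empty filter matches every document; find() returns a cursor, not an array.
  for await (const doc of names.find({})) {
    console.log(doc);
    docs.push(doc);
  }
  // The loop ends when the cursor is exhausted, so no null check is needed.
  return docs;
}
```

The end of the loop takes the place of the null check in the callback version: once it finishes, it is safe to close the connection.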

Deleting a Single Document

Delete a Single Document with the Mongo Shell – Example # 4A

Delete a Single Document with the MongoDB Node.JS Driver – Example # 4B

In Example # 4A, we use the mongo shell to remove one document at a time. Notice that we reference a specific document by providing the key “_id” and the ID of the document we wish to remove. But we don’t provide the ID simply as a string; we call the ObjectId function and pass the document ID to it. The reason for this is that MongoDB stores a generated _id as an ObjectId value rather than as a string, so the string must be converted to an ObjectId in order for the query to match the document.

In Example # 4B, we use the MongoDB Node.js driver to remove one document from the collection. The main difference here is that we use the deleteOne() method instead of the remove() method. And similar to the mongo shell approach, we provide an object that uniquely identifies the document we want to remove. This action returns a promise, so we can chain the then() method to its return value, and inside the callback we close the database connection (line # 19).
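The single-document delete described above can be sketched roughly as follows. The helper name deleteNameById is hypothetical, and the caller is assumed to pass an ObjectId (created with the ObjectId class exported by the mongodb package) rather than a plain string.

```javascript
// Sketch only: delete one document by _id with a promise-based driver.
// Shell equivalent, per the article: db.names.remove({ _id: ObjectId("...") })
// `names` is a driver Collection, e.g. client.db('madMen').collection('names').
async function deleteNameById(names, id) {
  // The filter must carry an ObjectId; a plain string would not match a generated _id.
  const result = await names.deleteOne({ _id: id });
  console.log(`Deleted ${result.deletedCount} document(s)`);
  return result.deletedCount;
}

// Typical call site (idString is a placeholder for a 24-character hex string):
//   const { ObjectId } = require('mongodb');
//   await deleteNameById(names, new ObjectId(idString));
```

A deletedCount of 0 simply means no document matched the given _id, which is worth checking before reporting success.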

Deleting All Documents

Delete All Documents with the Mongo Shell – Example # 5A

Delete All Documents with the MongoDB Node.JS Driver – Example # 5B

In Example # 5A, we use the mongo shell to remove all documents from the names collection. This is a fairly simple task: we provide an empty object to the remove() method, which indicates to MongoDB that we want to remove all documents.

Example # 5B is somewhat similar. Using the MongoDB Node.js driver, we remove all documents in the names collection by calling the deleteMany() method (as opposed to the “remove()” method). And in a similar fashion, we provide an empty object that signals to MongoDB that we want to remove all documents from the collection. Once again, this action returns a promise, so we chain the then() method, passing a callback, and inside of that callback, we close the database connection.
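A minimal sketch of the delete-everything step, written with async/await instead of then(), might look like this. The helper name deleteAllNames is hypothetical.

```javascript
// Sketch only: delete every document in a collection with a promise-based driver.
// Shell equivalent, per the article: db.names.remove({})
// `names` is a driver Collection, e.g. client.db('madMen').collection('names').
async function deleteAllNames(names) {
  // An empty filter object matches every document in the collection.
  const result = await names.deleteMany({});
  console.log(`Deleted ${result.deletedCount} document(s)`);
  return result.deletedCount;
}
```

Because the empty filter removes everything, it is worth double-checking which collection reference you pass in before calling this against real data.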

Summary

In this article, we walked through a comparison of basic CRUD operations performed with both the mongo shell and the MongoDB Node.js driver. In each example, we saw that there is a fairly significant difference in the syntax and, in some cases, the method names. The main reason for the differences is that the mongo shell is a REPL environment: each command runs and prints its result before the next one is entered, and the shell understands that we are working with MongoDB databases. The MongoDB Node.js driver generally requires more work, because our Node application is vanilla JavaScript and is not necessarily hosted in a MongoDB-specific environment. So, in this case, we need to set a reference to the MongoDB client, establish a database connection, and set references to the database and collection.

Now, as to which approach works best, it really depends on your needs. Both the mongo shell and the MongoDB Node.js driver provide significant power for your work with your MongoDB database. The difference is that the mongo shell is a terminal-based REPL environment, and the commands will tend to be simpler. On the other hand, the MongoDB Node.js driver provides a way to interact with MongoDB from your Node.js code. So, in this case, you’ll need to take a more low-level approach and write code that takes care of connecting to and disconnecting from the database, as well as your business logic. But while this will usually require more effort, there is great power in that you are writing application code that can have complex logic and be executed repeatedly.

for-in and for-of both provide a way to iterate over an object or array. The difference between them is: for-in provides access to the object keys ,

for-in and for-of both provide a way to iterate over an object or array. The difference between them is: for-in provides access to the object keys , Learn how to access the body of an HTTP POST request using the Express.js framework and body-parser module.

Learn how to access the body of an HTTP POST request using the Express.js framework and body-parser module.

Are you getting an ECMA-Headache?

Are you getting an ECMA-Headache?

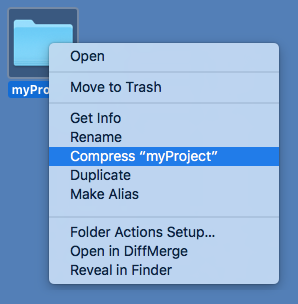

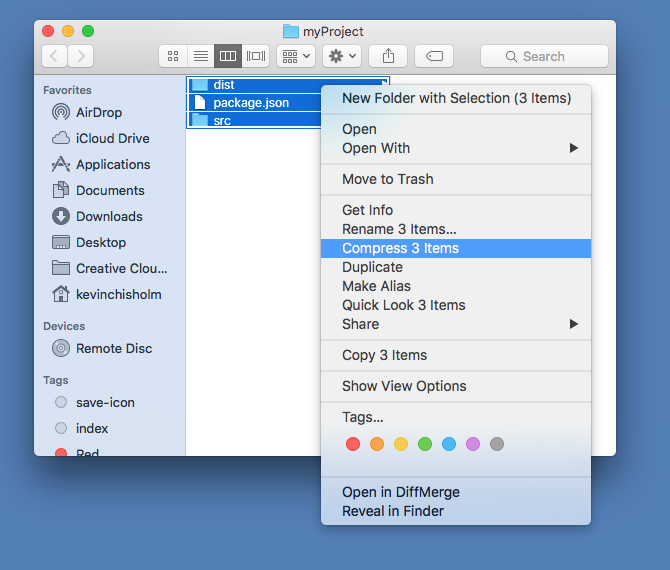

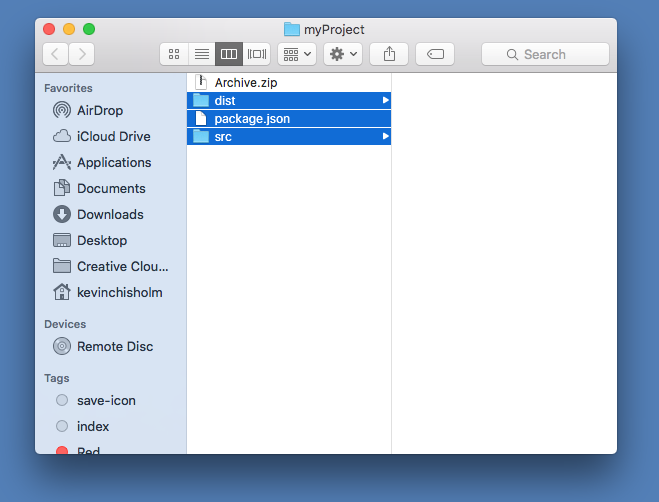

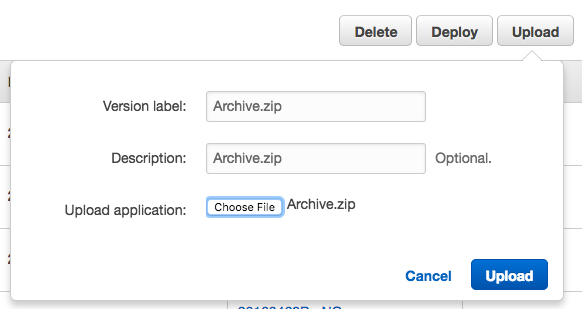

AWS’s Node deployment keeps telling me that it cannot find package.json, but it’s there! – Fortunately, this problem is easily solved.

AWS’s Node deployment keeps telling me that it cannot find package.json, but it’s there! – Fortunately, this problem is easily solved.

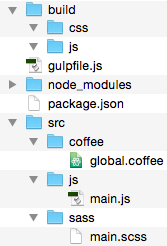

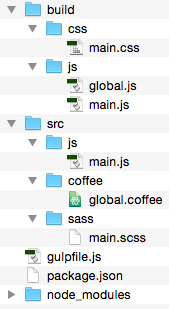

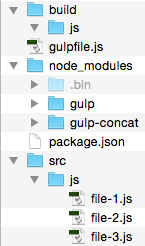

Learn how to automate your front-end build process using this streaming build system

Learn how to automate your front-end build process using this streaming build system

Like it or not, ECMAScript 6 is coming soon to a browser near you (and what’s not to like about that?). Learning about new additions to the specification is only a few clicks away.

Like it or not, ECMAScript 6 is coming soon to a browser near you (and what’s not to like about that?). Learning about new additions to the specification is only a few clicks away.